Citations not correlated with referee scores

August 19, 2020

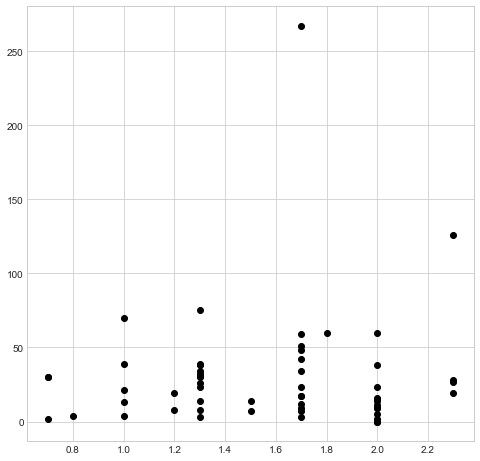

I logged into my EasyChair account and downloaded the average referee scores for 184 accepted papers. These are taken from conferences (ICALP, STACS and LICS) where I was on the PC, so I had access to the scores for the papers. In all cases, the scores were on a scale of -3 to +3, so they can be compared. Next, for each paper I looked up the number of citations according to Google Scholar. Here is the result (y axis is citations and x axis is average referee score):

You will see that there does not seem to be any strong relationship between the two variables. In fact, the correlation is very slightly negative (-0.004539), which is essentially zero.
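For readers who want to repeat this on their own data, here is a minimal sketch of how such a correlation can be computed. It assumes the Pearson coefficient, which is my guess at what was used and is not stated above. The function name `pearson` and the two short lists are made up for illustration; they are not the 184 papers from this post.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations (unnormalised covariance).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sums of squared deviations (unnormalised variances).
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


# Placeholder values only, NOT the data behind the scatter plot.
scores = [0.5, 1.0, 1.5, 2.0, 2.5]   # hypothetical average referee scores
citations = [12, 3, 40, 7, 15]       # hypothetical citation counts

r = pearson(scores, citations)
print(f"r = {r:.4f}")
```

A value near 0 means no linear relationship, while values near +1 or -1 mean a strong one. Citation counts tend to be heavily skewed, so a rank-based measure such as Spearman's coefficient might be worth trying as well.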

I understand that citation counts are a questionable metric, whose main advantage is that they can be counted and drawn in a scatter plot. Nevertheless, I think that these results are evidence that reviews, especially for conferences, do not reflect the value of a paper. My personal impression is that for conferences, higher scores are given to papers of the kind “we continue the work of x by solving problem y” and lower scores are given to papers of the kind “we introduce a new idea”. On the other hand, the impact (and therefore also the number of citations) is greater for the second kind.

Of course, I do not recommend getting rid of reviews, since there does not seem to be any better system.
